In this week’s newsletter George Siemens questions the use of visuals in communication and refers to a poorly researched article on this:

As I’ve stated, I’m trying to make greater use of visuals. Hard to make sense of the value of visuals with poorly presented articles like this: Why communicate visually. Some sloppy research on the old “10% hear, 20% read, 80% do” – this time attributed to Bruner. Will Thalheimer debunks/questions the validity of this claim. This automatically calls into question related statements in the article (not cited properly) about the prominence of visuals in learning and retention. I don’t trust the author. But then I have to ask myself, why I want to use images/visuals. To increase effectiveness of learners who take a course I teach? To improve my ability to communicate? What can visuals do that text can’t? And where is the research that supports that claim?

Though they are relevant, I don’t want to address all of Siemens’ questions in this post. Instead, I spent some time looking more closely at Will Thalheimer’s post on one of the major myths in learning, namely that people remember 10% of what they read, 20% of what they see, 30% of what they hear, etc., which implies that “learning by doing” is always the best way of learning.

Apparently the percentages have often been attributed to the work of American educator Edgar Dale (1900-1985) and his book “Audiovisual Methods in Teaching” (1946, 1954 & 1969). In this book Dale presented the Cone of Experience, which depicts various types of audio-visual experiences classified by how concrete or abstract they are, but it includes no percentages at all! In numerous posts Thalheimer has exposed the misuse and misinterpretation of Dale’s original work – including a fresh example from a conference in the workplace learning field in January 2009.

Back in 2002 Tony Betrus & Al Januszewski gave a presentation at the Association for Educational Communications and Technology (AECT) conference entitled “For the Record: The Misinterpretation of Edgar Dale’s Cone of Experience”. Betrus & Januszewski present 14 examples of misinterpretations – all exposing sloppy research. As Donald H. Taylor comments:

The very worst of it? Some of these diagrams are produced by people who really should know better. Academic bodies such as North Carolina State University, services for educators such as Video4learning.com, and one individual – working for the good of others – who put a lot of work into producing two different pyramids, with the specific aim of making the diagrams available for free, general use, under creative commons.

Apart from the disturbing fact that certain academic researchers continue to misuse and misinterpret Dale’s model and combine it with bogus data, this example raises a fundamental question: what do we actually know – based on valid research! – about effective ways of learning? Depending on how you define learning, I think many answers could be given to that question. However, in the foreword to a recent Cisco study on the effectiveness of multimodal learning, Cisco’s Head of Education, Charles Fadel, writes the following:

There is a lot of misinformation circulating about the effectiveness of multimodal learning, some of it seemingly fabricated for convenience. As curriculum designers embrace multimedia and technology wholeheartedly, we considered it important to set the record straight, in the interest of the most effective teaching and learning.

This report is the third in a series that addresses “what the research says”. It also refers to the many misinterpretations of Dale’s model and concludes:

The person(s) who added percentages to the cone of learning were looking for a silver bullet, a simplistic approach to a complex issue. A closer look now reveals that one size does not fit all learners. As it turns out, doing is not always more efficient than seeing, and seeing is not always more effective than reading. (p.8)

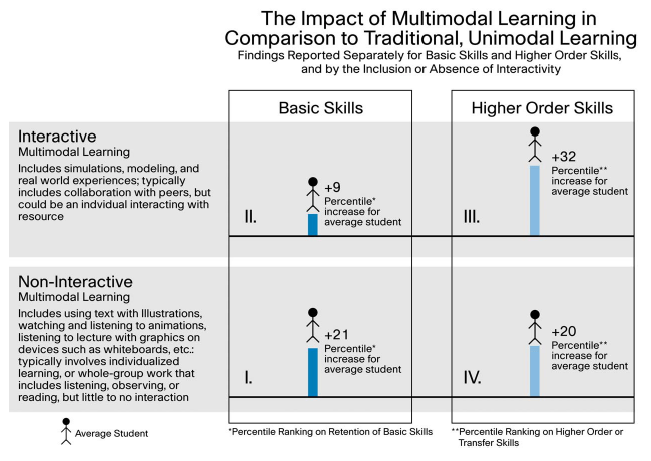

The report then explores the effectiveness of multimodal learning in comparison to traditional learning, based on meta-analyses and on experimental and quasi-experimental studies published from 1997 to 2007, and comes up with this interesting figure:

- Quadrants I and II: The average student’s scores on basic skills assessments increase by 21 percentiles when engaged in non-interactive, multimodal learning (includes using text with visuals, text with audio, watching and listening to animations or lectures that effectively use visuals, etc.) in comparison to traditional, single-mode learning. When that situation shifts from non-interactive to interactive, multimedia learning (such as engagement in simulations, modeling, and real-world experiences – most often in collaborative teams or groups), results are not quite as high, with average gains at 9 percentiles. While not statistically significant, these results are still positive. (p. 13)

- Quadrants III and IV: When the average student is engaged in higher-order thinking using multimedia in interactive situations, on average, their percentage ranking on higher-order or transfer skills increases by 32 percentile points over what that student would have accomplished with traditional learning. When the context shifts from interactive to noninteractive multimodal learning, the result is somewhat diminished, but is still significant at 20 percentile points over traditional means. (p.14)

This report actually provides some substantiated evidence of the effectiveness of multimodal learning, but wisely cautions the reader:

This analysis provides a clear rationale for using multimedia in learning. That said, the reader should be cautioned that the research in this field is evolving, with recent articles suggesting that efficacy, motivation, and volition of learners, as well as the type of learning task and the level of instructional scaffolding, can weigh heavily on the learning outcomes from the use of multimedia. (p.14)

It’s an interesting report well worth reading, but you can also watch Charles Fadel discuss it with Elliott Masie at the Learning 2008 conference here.

/Mariis

Special thanks to Carsten Storgaard for the video link :-)

Great article! It’s about time we started addressing issues of the validity of claims for multimedia learning. Claims made on the basis of spurious data or misinterpretations can do untold damage to emerging learning technologies.

Has anyone looked into the efficacy of multimedia learning in specific subject/skills areas, e.g. humanities, sciences, languages, etc.?

Thx, Matt. I think most of us involved in multimodal learning regularly struggle with documenting the efficacy. I see that you have a special interest in ESL – not really my area of expertise, but in relation to my object of study – the 3D virtual world Second Life – I highly recommend the following two bloggers who explore the potential of using Second Life and other new technologies in ESL teaching and learning:

http://esl-secondlife.blogspot.com/

http://quickshout.blogspot.com/

Btw, your website looks really interesting – I’ll have a closer look when I get back to working full time .. thx for visiting :-)

/Mariis